A Study of ASPICE

What Developers Need to Do in ASPICE Projects

Agenda

Common Misunderstandings About ASPICE

ASPICE is NOT:

A coding standard

It does not prescribe MISRA C, AUTOSAR, or similar coding rules.

A document template or design method

It does not require UML or any particular template.

A specific tool requirement

Tools are your choice.

A testing framework

It does not mandate unit test tools.

A quality guarantee by itself

Following a process does not guarantee zero defects.

A certification for bug-free software

It is a process assessment.

A one-time audit preparation activity

It is continuous: it happens in every sprint and every release.

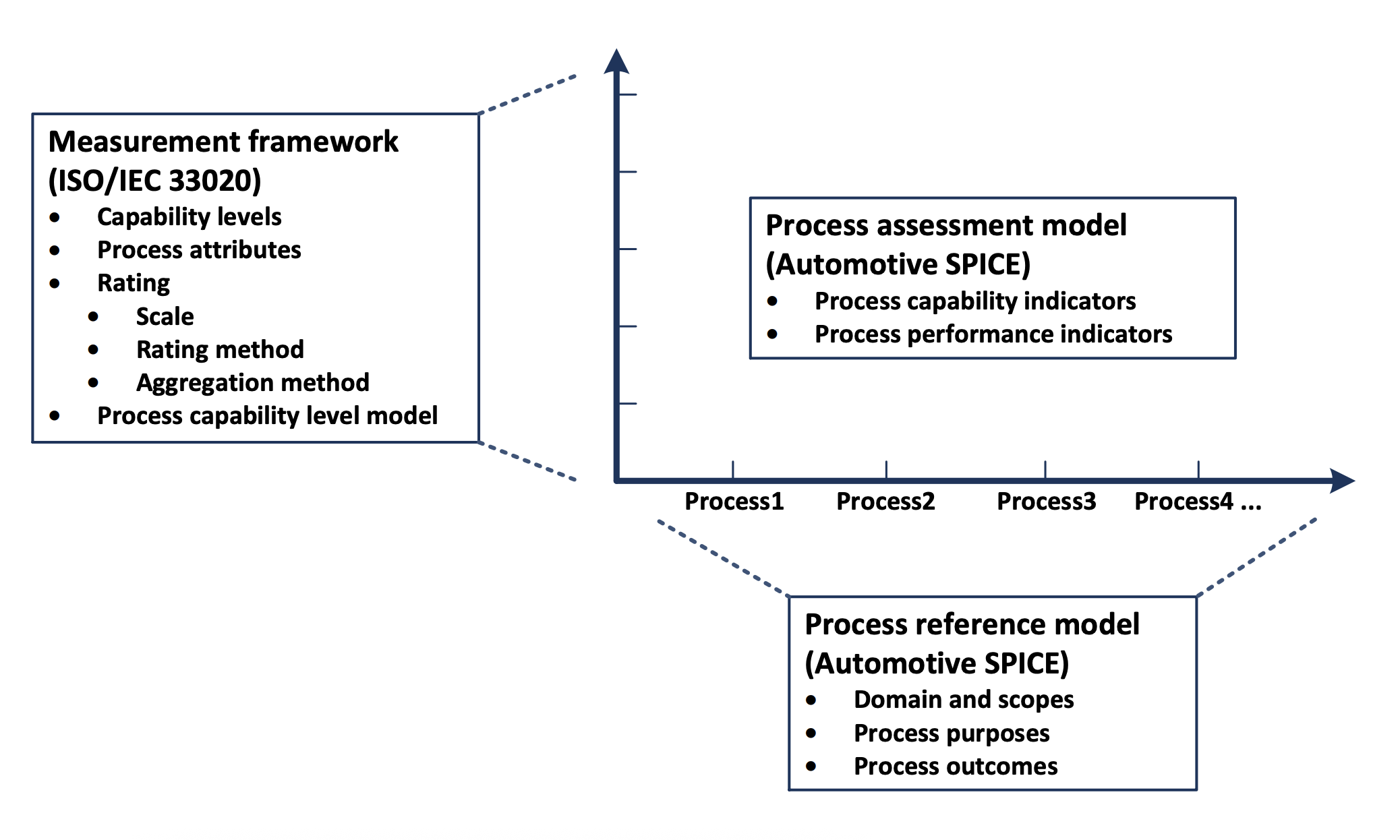

Process Assessment and SPICE

SPICE = Software Process Improvement and Capability Determination

Origin: ISO/IEC 15504, succeeded by the ISO/IEC 330xx series.

Key questions SPICE asks

- Can your team build software predictably?

- Do results happen consistently?

- Can the process be repeated and improved?

Assessment focus

Process capability, systematic evidence, predictability.

What ASPICE Really Is

Automotive-SPICE

A process assessment framework for automotive software and system development.

Why Automotive SPICE exists

- Safety-critical software and long product lifecycles

- Complex supply chains (OEM -> Tier-1 -> Tier-2)

- Need for a shared assessment language

ASPICE = PRM + PAM

PRM (Process Reference Model) defines what to do.

PAM (Process Assessment Model) rates how well you do it.

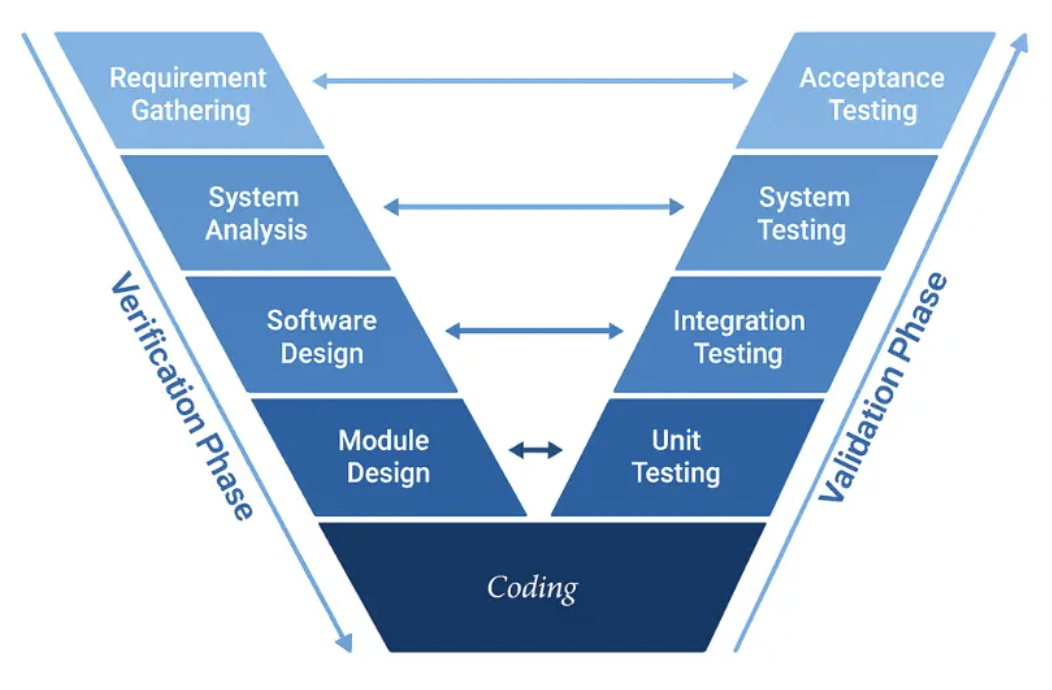

The V-Model Mindset

From Waterfall to V-Model

Development activities are paired with early test planning.

Left side: Development

- Requirements -> Design -> Implementation

Right side: Verification

- Unit Test -> Integration Test -> System Test (bottom-up)

Key concept: Traceability

Each requirement -> design -> code -> test.

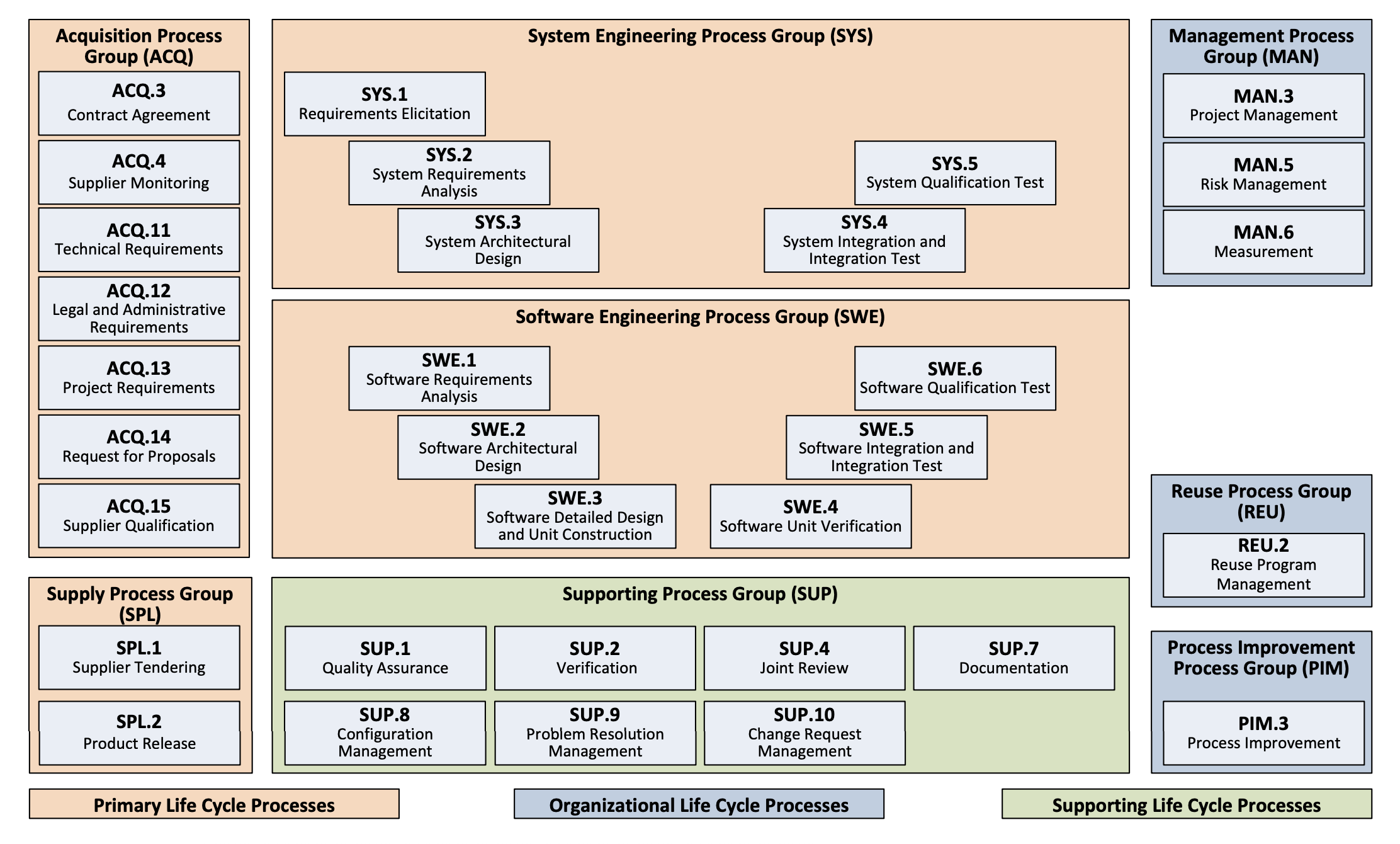

ASPICE Process Reference Model

Three process categories

Primary, Organizational, and Supporting life cycle processes.

- Primary Life Cycle (ACQ, SPL, SYS, SWE)

- Organizational Life Cycle (MAN, PIM, REU)

- Supporting Life Cycle (SUP)

Heart of ASPICE

SYS + SWE implement the V-model step by step.
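The pairing between the two sides of the V can be written down as a simple lookup table. This is a minimal Python sketch: `V_MODEL_PAIRS` and `verifying_process` are hypothetical names, and the pairs follow the purposes listed for each process in this deck (for example, SWE.4 verifies units against the SWE.3 detailed design). SYS.1 is left out on purpose because it has no paired test process in this mapping.

```python
# ASPICE 3.1 engineering processes on the left side of the V, mapped to the
# right-side process that verifies their output (as described in this deck).
V_MODEL_PAIRS = {
    "SYS.2": "SYS.5",  # system requirements    <-> system qualification test
    "SYS.3": "SYS.4",  # system architecture    <-> system integration test
    "SWE.1": "SWE.6",  # software requirements  <-> software qualification test
    "SWE.2": "SWE.5",  # software architecture  <-> software integration test
    "SWE.3": "SWE.4",  # detailed design/units  <-> software unit verification
}


def verifying_process(dev_process: str) -> str:
    """Return the right-side process that verifies the given left-side process."""
    try:
        return V_MODEL_PAIRS[dev_process]
    except KeyError:
        raise ValueError(f"{dev_process} is not a left-side V-model process") from None


if __name__ == "__main__":
    for left, right in V_MODEL_PAIRS.items():
        print(f"{left} is verified by {right}")
```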

Each process has

- ID and Name

- Purpose

- Activities (Base Practices - BP)

- Outcomes (evidenced by output Work Products - WP)

What is a "System" in Automotive?

System != just software

It includes hardware, electronics, and integration.

System includes

- Software running on processors

- Hardware (chips, boards, sensors)

- ECUs (Electronic Control Units)

- Mechanical parts (housing, connectors)

- Communication networks (CAN, Ethernet, LIN)

- Power supply and actuators

SYS vs SWE

SYS is system-level (HW + SW); SWE is software-only.

SYS.1 - Requirements Elicitation

Purpose

Gather stakeholder needs and establish a baseline.

Outcome

Agreed stakeholder requirements with change control.

Key activities

- Obtain requirements from stakeholders

- Ensure mutual understanding

- Get explicit agreement

- Establish a formal baseline

- Manage changes with impact assessment

- Set up a mechanism for tracking requirement status
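A baseline is an agreed, frozen set of requirements, and impact assessment starts from knowing what changed between two baselines. The sketch below assumes requirements are stored as ID-to-text pairs; `Baseline`, `diff_baselines`, and the `STK-..` IDs are hypothetical, and ASPICE does not prescribe how a baseline is stored (in practice a requirements management or version control tool usually does this).

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Baseline:
    """Hypothetical snapshot of agreed stakeholder requirements (ID -> text).
    frozen=True prevents rebinding the fields; it does not deep-freeze the dict."""
    name: str
    requirements: dict = field(default_factory=dict)


def diff_baselines(old: Baseline, new: Baseline) -> dict:
    """List requirement IDs that were added, removed, or changed between baselines.
    The result is a starting point for the impact assessment of a change."""
    old_ids, new_ids = set(old.requirements), set(new.requirements)
    changed = {rid for rid in old_ids & new_ids
               if old.requirements[rid] != new.requirements[rid]}
    return {
        "added": sorted(new_ids - old_ids),
        "removed": sorted(old_ids - new_ids),
        "changed": sorted(changed),
    }


bl_1_0 = Baseline("BL-1.0", {"STK-01": "Show position", "STK-02": "Show UTC time"})
bl_1_1 = Baseline("BL-1.1", {"STK-01": "Show position at 1 Hz",
                             "STK-03": "Warn on GNSS fix loss"})
print(diff_baselines(bl_1_0, bl_1_1))
# {'added': ['STK-03'], 'removed': ['STK-02'], 'changed': ['STK-01']}
```

Each changed or removed ID would then be followed through its trace links to find the affected system requirements, design, and tests.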

SYS.2 - System Requirements Analysis

Purpose

Transform stakeholder needs into detailed system requirements.

Outcome

Detailed, traceable, testable system requirements.

Key activities

- Specify system requirements (functions and capabilities)

- Structure and prioritize them

- Analyze for correctness, feasibility, verifiability

- Analyze impact on environment and interfaces

- Develop verification criteria

- Establish bidirectional traceability (stakeholder requirements)

- Ensure consistency

SYS.3 - System Architectural Design

Purpose

Define system architecture and allocate requirements.

Outcome

System architecture with allocated requirements and defined interfaces.

Key activities

- Develop system architectural design (HW + SW elements)

- Allocate system requirements to elements

- Define interfaces between elements

- Evaluate dynamic behavior and interactions

- Evaluate alternative architectures

- Establish bidirectional traceability (system requirements)

- Ensure consistency

SYS.4 - System Integration and Integration Test

Purpose

Integrate system items and verify architectural design.

Outcome

Integrated system verified against architectural design.

Key activities

- Develop integration strategy (sequence of integration)

- Develop integration test strategy (including regression)

- Create test cases proving compliance with architecture

- Integrate items step by step

- Select and execute test cases

- Establish bidirectional traceability: architecture -> test cases -> results

- Ensure consistency

SYS.5 - System Qualification Test

Purpose

Verify complete system against system requirements.

Outcome

System ready for delivery, verified against all requirements.

Key activities

- Develop qualification test strategy (including regression)

- Create test cases proving compliance with system requirements

- Select and execute test cases

- Record test results and logs

- Establish bidirectional traceability: requirements -> test cases -> results

- Ensure consistency

SWE.1 - Software Requirements Analysis

Purpose

Transform system requirements into software requirements.

Outcome

Detailed, traceable, testable software requirements.

Key activities

- Specify software requirements from system requirements

- Structure and prioritize them

- Analyze for correctness, feasibility, verifiability

- Analyze impact on operating environment

- Develop verification criteria

- Establish bidirectional traceability (system requirements and architecture)

- Ensure consistency

SWE.2 - Software Architectural Design

Purpose

Define software architecture and allocate requirements.

Outcome

Software architecture with allocated requirements and interfaces.

Key activities

- Develop software architectural design (modules, components)

- Allocate software requirements to elements

- Define interfaces between software elements

- Evaluate timing and dynamic interactions

- Document resource consumption (memory, CPU)

- Evaluate alternative architectures

- Establish bidirectional traceability (software requirements)

- Ensure consistency
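Documenting resource consumption is easier to review when each component's estimate is checked against the ECU budget. A minimal sketch, assuming per-component RAM and CPU estimates are recorded as plain numbers; the component names, figures, budget, and `check_budget` are all hypothetical.

```python
# Hypothetical per-component resource estimates recorded in the architecture.
COMPONENTS = {
    "GnssDriver":   {"ram_kib": 24, "cpu_pct": 6.0},
    "PositionCalc": {"ram_kib": 48, "cpu_pct": 12.5},
    "AlertManager": {"ram_kib": 8,  "cpu_pct": 1.5},
}
# Hypothetical resource budget allocated to this software on the ECU.
ECU_BUDGET = {"ram_kib": 128, "cpu_pct": 30.0}


def check_budget(components, budget):
    """Sum each budgeted resource over all components and compare it with the limit.
    Returns {resource: (total, limit, within_budget)}."""
    report = {}
    for resource, limit in budget.items():
        total = sum(c[resource] for c in components.values())
        report[resource] = (total, limit, total <= limit)
    return report


for resource, (total, limit, ok) in check_budget(COMPONENTS, ECU_BUDGET).items():
    print(f"{resource}: {total} of {limit} {'OK' if ok else 'OVER BUDGET'}")
```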

SWE.3 - Software Detailed Design and Unit Construction

Purpose

Design software units and implement them.

Outcome

Detailed design plus implemented and documented units.

Key activities

- Develop detailed design for each component (functions, classes)

- Specify interfaces of each unit

- Evaluate dynamic behavior and unit interactions

- Evaluate design quality (complexity, testability, risks)

- Establish bidirectional traceability: requirements -> units

- Ensure consistency

- Develop and document executable code

SWE.4 - Software Unit Verification

Purpose

Verify units against detailed design and requirements.

Outcome

Verified units with evidence of compliance.

Key activities

- Develop unit verification strategy (testing and static analysis)

- Define verification criteria (test cases, coverage, coding standards)

- Perform static verification (code reviews, static analysis)

- Execute unit tests and record results

- Establish bidirectional traceability: units -> design -> test cases -> results

- Ensure consistency
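SWE.4 asks for unit tests whose results are recorded and traced to the design and requirements. The sketch below uses Python's standard `unittest`; the unit `fix_status`, its 4-satellite threshold, and the "Verifies: SWR-003" docstring tag are hypothetical. ASPICE does not prescribe this tagging convention; it is one way a script or tool could collect test-to-requirement links.

```python
import unittest

# Hypothetical unit under test. The rule "fewer than 4 satellites -> NO_FIX"
# and the requirement ID SWR-003 are illustrative assumptions.
MIN_SATELLITES_FOR_FIX = 4


def fix_status(satellites_in_view: int) -> str:
    """Return "FIX" or "NO_FIX" depending on how many satellites are in view."""
    if satellites_in_view < 0:
        raise ValueError("satellite count cannot be negative")
    return "FIX" if satellites_in_view >= MIN_SATELLITES_FOR_FIX else "NO_FIX"


class FixStatusTest(unittest.TestCase):
    """Verifies: SWR-003 (hypothetical trace tag linking this test to a requirement)."""

    def test_no_fix_just_below_threshold(self):
        self.assertEqual(fix_status(MIN_SATELLITES_FOR_FIX - 1), "NO_FIX")

    def test_fix_at_threshold(self):
        self.assertEqual(fix_status(MIN_SATELLITES_FOR_FIX), "FIX")

    def test_negative_count_is_rejected(self):
        with self.assertRaises(ValueError):
            fix_status(-1)


if __name__ == "__main__":
    # Run the suite without calling sys.exit(), so the result can be recorded afterwards.
    suite = unittest.defaultTestLoader.loadTestsFromTestCase(FixStatusTest)
    result = unittest.TextTestRunner(verbosity=2).run(suite)
    print("passed" if result.wasSuccessful() else "failed")
```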

SWE.5 - Software Integration and Integration Test

Purpose

Integrate units into complete software and verify architecture.

Outcome

Integrated software verified against architectural design.

Key activities

- Develop integration strategy (sequence of integration)

- Develop integration test strategy (including regression)

- Create test cases proving compliance with software architecture

- Integrate units step by step

- Select and execute test cases

- Establish bidirectional traceability: architecture -> test cases -> results

- Ensure consistency
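One common way to derive an integration sequence is bottom-up: integrate a component only after everything it depends on. The sketch below uses Python's standard `graphlib.TopologicalSorter` (Python 3.9+) to compute such an order. The component names and dependency graph are hypothetical, and bottom-up is only one possible strategy; top-down or feature-driven sequences are also used.

```python
from graphlib import TopologicalSorter

# Hypothetical software components, each mapped to the components it depends on.
DEPENDENCIES = {
    "AlertManager": {"PositionCalc"},
    "PositionCalc": {"GnssDriver", "TimeBase"},
    "GnssDriver": {"UartHal"},
    "TimeBase": set(),
    "UartHal": set(),
}


def integration_order(dependencies):
    """Bottom-up integration sequence: each component comes after everything it uses.
    Raises graphlib.CycleError if the dependencies are circular."""
    return list(TopologicalSorter(dependencies).static_order())


for step, component in enumerate(integration_order(DEPENDENCIES), start=1):
    print(f"Step {step}: integrate {component}")
```

Components with no mutual dependency (here `TimeBase` and `UartHal`) may come out in either order; the integration test strategy decides which to integrate first.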

SWE.6 - Software Qualification Test

Purpose

Verify complete software against software requirements.

Outcome

Software ready for system integration, verified against all requirements.

Key activities

- Develop qualification test strategy (including regression)

- Create test cases proving compliance with software requirements

- Select and execute test cases

- Record test results and logs

- Establish bidirectional traceability: requirements -> test cases -> results

- Ensure consistency
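The chain requirements -> test cases -> results can be rolled up into a verification status per requirement. A minimal sketch, assuming each test case has one recorded verdict of "pass" or "fail" from the latest run; all IDs, verdicts, status names, and `requirement_status` are hypothetical.

```python
# Hypothetical qualification-test data: requirement -> linked test cases,
# and the recorded verdict of each test case from the latest run.
REQ_TO_TESTS = {
    "SWR-101": ["TC-101", "TC-102"],
    "SWR-102": ["TC-102"],
    "SWR-103": ["TC-103"],
    "SWR-104": [],          # no test case traces to this requirement yet
    "SWR-105": ["TC-104"],  # linked test exists but has no recorded result
}
VERDICTS = {"TC-101": "pass", "TC-102": "pass", "TC-103": "fail"}


def requirement_status(req_to_tests, verdicts):
    """Roll test verdicts up to requirements (requirements -> test cases -> results)."""
    status = {}
    for req, tests in req_to_tests.items():
        results = [verdicts.get(tc) for tc in tests]  # None = no recorded result
        if not tests:
            status[req] = "not covered"
        elif "fail" in results:
            status[req] = "failed"
        elif all(r == "pass" for r in results):
            status[req] = "verified"
        else:
            status[req] = "incomplete"  # some linked test has not been run
    return status


for req, state in requirement_status(REQ_TO_TESTS, VERDICTS).items():
    print(f"{req}: {state}")
```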

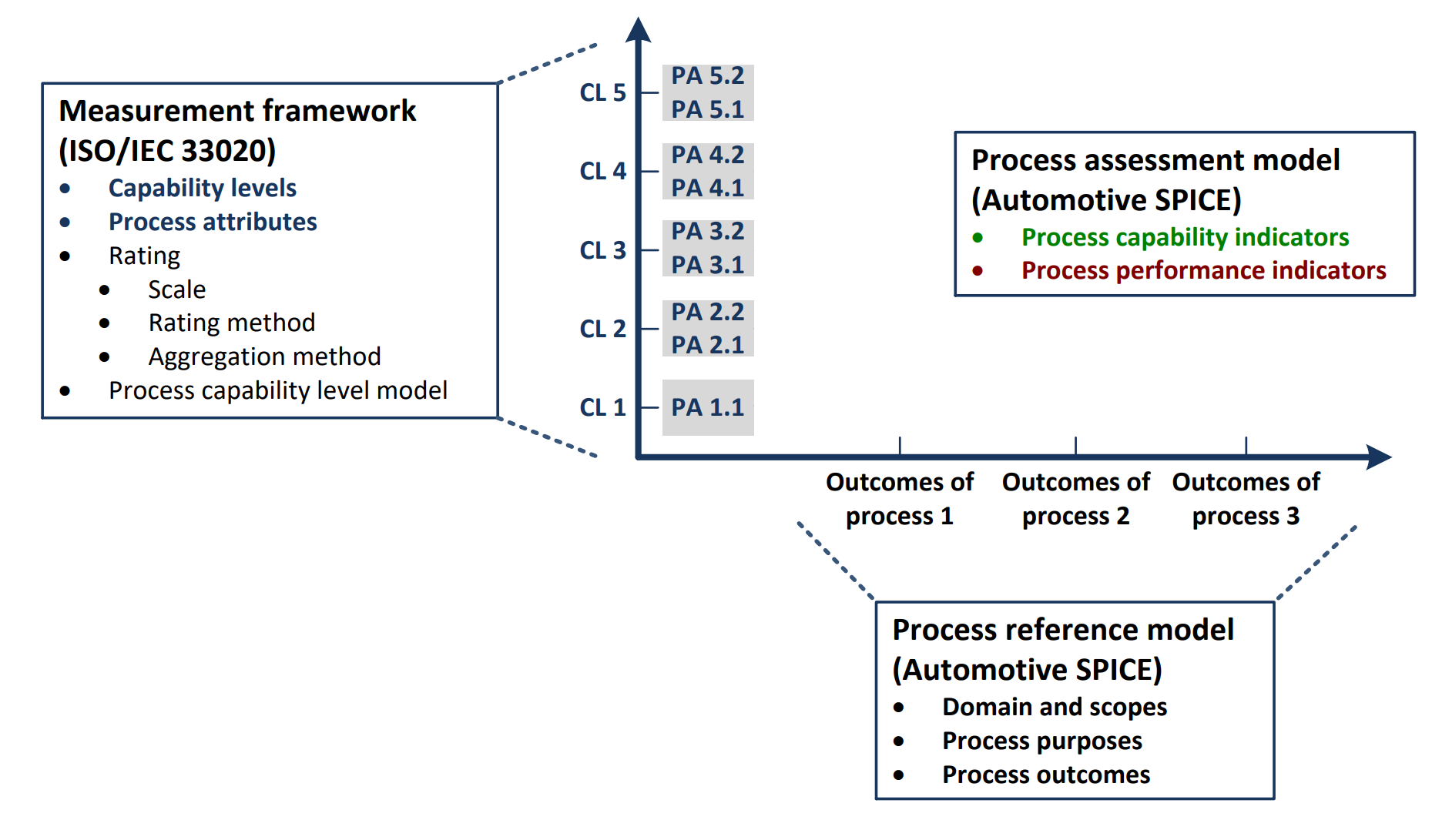

The Process Assessment Model (PAM)

- PAM is the measurement framework for process capability.

- It defines capability levels and Process Attributes (PA).

- Assessments rate achievement and determine the capability level.

- Automotive projects typically target Level 2 or 3.

Capability Levels (0 to 5)

| Level | Description |
|---|---|
| Level 0: Incomplete process | Not implemented, or fails to achieve its purpose; little or no evidence of systematic achievement. |
| Level 1: Performed process | The implemented process achieves its purpose. |
| Level 2: Managed process | Planned, monitored, and adjusted; work products are established, controlled, and maintained. |
| Level 3: Established process | A defined process is used, capable of achieving its outcomes. |
| Level 4: Predictable process | Operates within defined limits; measured and quantitatively controlled. |
| Level 5: Innovating process | Continuously improved to respond to change in line with organizational goals. |

Capability Levels and Process Attributes

| Capability Level | Attribute ID | Process Attributes |
|---|---|---|
| Level 0: Incomplete process | - | - |
| Level 1: Performed process | PA 1.1 | Process performance process attribute |
| Level 2: Managed process | PA 2.1 | Performance management process attribute |
| | PA 2.2 | Work product management process attribute |
| Level 3: Established process | PA 3.1 | Process definition process attribute |
| | PA 3.2 | Process deployment process attribute |
| Level 4: Predictable process | PA 4.1 | Quantitative analysis process attribute |
| | PA 4.2 | Quantitative control process attribute |
| Level 5: Innovating process | PA 5.1 | Process innovation process attribute |
| | PA 5.2 | Process innovation implementation process attribute |

Rating Scale and Achievement Range

| Rating | Achievement Level | Description | Achievement Range |
|---|---|---|---|
| N | Not achieved | There is little or no evidence of achievement of the defined process attribute in the assessed process. | 0% to 15% |
| P | Partially achieved | There is some evidence of an approach to, and some achievement of, the defined process attribute in the assessed process. Some aspects of achievement of the process attribute may be unpredictable. | >15% to 50% |
| L | Largely achieved | There is evidence of a systematic approach to, and significant achievement of, the defined process attribute in the assessed process. Some weaknesses related to this process attribute may exist in the assessed process. | >50% to 85% |
| F | Fully achieved | There is evidence of a complete and systematic approach to, and full achievement of, the defined process attribute in the assessed process. No significant weaknesses related to this process attribute exist in the assessed process. | >85% to 100% |
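The achievement ranges translate directly into a lookup. This sketch only encodes the percentage bands from the table above; in a real assessment the assessor judges the rating from evidence rather than computing an exact percentage. `pa_rating` is a hypothetical helper.

```python
def pa_rating(achievement_pct: float) -> str:
    """Map a process attribute achievement percentage to the N/P/L/F rating scale."""
    if not 0 <= achievement_pct <= 100:
        raise ValueError("achievement must be between 0 and 100 percent")
    if achievement_pct <= 15:
        return "N"  # Not achieved: 0% to 15%
    if achievement_pct <= 50:
        return "P"  # Partially achieved: >15% to 50%
    if achievement_pct <= 85:
        return "L"  # Largely achieved: >50% to 85%
    return "F"      # Fully achieved: >85% to 100%


for pct in (10, 40, 70, 90):
    print(pct, pa_rating(pct))
```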

Process Capability Level Model

| Capability Level | Process Attributes (PA) | Required Rating |
|---|---|---|
| Level 1 – Performed | PA 1.1: Process Performance | Largely |
| Level 2 – Managed | PA 1.1: Process Performance | Fully |
| | PA 2.1: Performance Management | Largely |
| | PA 2.2: Work Product Management | Largely |
| Level 3 – Established | PA 1.1: Process Performance | Fully |
| | PA 2.1: Performance Management | Fully |
| | PA 2.2: Work Product Management | Fully |
| | PA 3.1: Process Definition | Largely |
| | PA 3.2: Process Deployment | Largely |
| Level 4 – Predictable | PA 1.1: Process Performance | Fully |
| | PA 2.1: Performance Management | Fully |
| | PA 2.2: Work Product Management | Fully |
| | PA 3.1: Process Definition | Fully |
| | PA 3.2: Process Deployment | Fully |
| | PA 4.1: Quantitative Analysis | Largely |
| | PA 4.2: Quantitative Control | Largely |
| Level 5 – Innovating | PA 1.1: Process Performance | Fully |
| | PA 2.1: Performance Management | Fully |
| | PA 2.2: Work Product Management | Fully |
| | PA 3.1: Process Definition | Fully |
| | PA 3.2: Process Deployment | Fully |
| | PA 4.1: Quantitative Analysis | Fully |
| | PA 4.2: Quantitative Control | Fully |
| | PA 5.1: Process Innovation | Largely |
| | PA 5.2: Process Innovation Implementation | Largely |

- "Largely" in this table means the PA must be rated at least Largely (L or F).

- Each level also requires all PAs of the lower levels to be Fully achieved.
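The two rules under the table (the current level's PAs at least Largely, all lower-level PAs Fully) can be expressed as a short loop. A minimal sketch; `capability_level` and the ratings dictionary format are hypothetical, and an unrated PA is treated as N.

```python
# Process attributes introduced at each capability level.
LEVEL_ATTRIBUTES = {
    1: ["PA 1.1"],
    2: ["PA 2.1", "PA 2.2"],
    3: ["PA 3.1", "PA 3.2"],
    4: ["PA 4.1", "PA 4.2"],
    5: ["PA 5.1", "PA 5.2"],
}


def capability_level(ratings: dict) -> int:
    """Return the highest capability level reached, given N/P/L/F ratings per PA.

    A level is reached when its own PAs are rated at least Largely (L or F)
    and every PA of the lower levels is rated Fully (F). Unrated PAs count as N.
    """
    reached = 0
    for level in range(1, 6):
        own_ok = all(ratings.get(pa, "N") in ("L", "F")
                     for pa in LEVEL_ATTRIBUTES[level])
        lower_ok = all(ratings.get(pa, "N") == "F"
                       for lower in range(1, level)
                       for pa in LEVEL_ATTRIBUTES[lower])
        if not (own_ok and lower_ok):
            break
        reached = level
    return reached


# Hypothetical assessment result: PA 2.1 is only Largely achieved.
example = {"PA 1.1": "F", "PA 2.1": "L", "PA 2.2": "F", "PA 3.1": "L", "PA 3.2": "L"}
print(capability_level(example))  # 2: Level 3 would need PA 2.1 rated F
```

The example shows why a project with good Level 3 practices can still be rated Level 2: a single Largely rating on a lower-level PA caps the result.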

Traceability - What and Why

- Traceability = links between work products

- Bidirectional traceability:

  - Downstream (forward): Requirements -> Design -> Code -> Tests

  - Upstream (backward): Tests -> Code -> Design -> Requirements


- Why it matters:

  - Completeness: every requirement is implemented and tested

  - Necessity: no code or tests without a requirement behind them

  - Impact analysis: when a requirement changes, you know which design, code, and tests to revisit

  - It answers: "Where is this implemented? Which test verifies it?"
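Bidirectional traceability amounts to following the same links in both directions. The sketch below assumes links are stored as (upstream, downstream) pairs and that item types can be recognized from a hypothetical ID prefix (`SWR-`, `DES-`, `TC-`) or a `.c` file name; all items and links are made up for illustration. It checks completeness (requirements with no test), necessity (code with no requirement), and impact analysis (everything downstream of a changed requirement).

```python
from collections import defaultdict

# Hypothetical trace links, each pointing downstream:
# requirement -> design element -> code unit -> test case.
LINKS = [
    ("SWR-001", "DES-01"), ("DES-01", "pos_filter.c"), ("pos_filter.c", "TC-010"),
    ("SWR-002", "DES-02"), ("DES-02", "utc_sync.c"),  # no test linked yet
    ("DES-03", "debug_dump.c"),                       # design with no requirement
]


def build_index(links):
    """Index the links in both directions so they can be followed either way."""
    forward, backward = defaultdict(set), defaultdict(set)
    for src, dst in links:
        forward[src].add(dst)
        backward[dst].add(src)
    return forward, backward


def reachable(start, index):
    """Every item reachable from start by repeatedly following index."""
    seen, stack = set(), [start]
    while stack:
        for nxt in index.get(stack.pop(), ()):
            if nxt not in seen:
                seen.add(nxt)
                stack.append(nxt)
    return seen


# Hypothetical naming convention used to tell item types apart.
def is_requirement(item):
    return item.startswith("SWR-")


def is_test(item):
    return item.startswith("TC-")


def is_code(item):
    return item.endswith(".c")


forward, backward = build_index(LINKS)
items = {item for link in LINKS for item in link}

# Completeness (downstream): requirements that no test case traces back to.
untested = sorted(r for r in items if is_requirement(r)
                  and not any(is_test(x) for x in reachable(r, forward)))
# Necessity (upstream): code that no requirement justifies.
orphans = sorted(c for c in items if is_code(c)
                 and not any(is_requirement(x) for x in reachable(c, backward)))
# Impact analysis: everything downstream of a changed requirement.
impacted = reachable("SWR-001", forward)

print("untested:", untested)  # untested: ['SWR-002']
print("orphans:", orphans)    # orphans: ['debug_dump.c']
print("impacted by SWR-001:", sorted(impacted))
```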

Consistency - More Than Just Links

- Consistency = content and meaning match

- Traceability != Consistency

Traceability: "A is linked to B"

Consistency: "A and B actually say the same thing"

- Example:

  - Requirement: "Alert on GNSS fix loss"

  - Design: "Display alert when fix lost" (consistent)

  - Design: "Alert when TTF > expected" (inconsistent: linked, but triggered by a different condition)

- Every engineering process (SYS.2 to SYS.5, SWE.1 to SWE.6) requires both:

  - "Establish bidirectional traceability"

  - "Ensure consistency"
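Consistency is usually checked by review, because it concerns meaning rather than links. The sketch below only illustrates the difference between the two checks: it assumes a hypothetical structured `trigger` field on each item, so both GNSS design items from the example are traced to the requirement, but only one states the same trigger condition. The IDs, the `trigger` values, and the helper names are illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Item:
    id: str
    text: str
    trigger: str  # hypothetical structured field: the condition that raises the alert


requirement = Item("SWR-020", "Alert on GNSS fix loss", trigger="fix_lost")
design_a = Item("DES-20a", "Display alert when fix lost", trigger="fix_lost")
design_b = Item("DES-20b", "Alert when TTF > expected", trigger="ttf_exceeded")

# Both design items are linked to the requirement, so traceability is complete.
LINKS = {(requirement.id, design_a.id), (requirement.id, design_b.id)}


def traced(upper: Item, lower: Item) -> bool:
    """Traceability: a link between the two items exists."""
    return (upper.id, lower.id) in LINKS


def consistent(upper: Item, lower: Item) -> bool:
    """Consistency: the items are linked AND state the same trigger condition."""
    return traced(upper, lower) and upper.trigger == lower.trigger


for design in (design_a, design_b):
    print(design.id, "traced:", traced(requirement, design),
          "consistent:", consistent(requirement, design))
```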

Practical Example - Traceability Matrix

- Example: GNSS software module

- Traceability Matrix:

| Requirement/Test | TC-010 (Nominal) | TC-011 (UTC) | TC-012 (Fix-loss) | TC-013 (Rate) |
|---|---|---|---|---|
| SWR-001 (pos @1Hz) | X | X | | |
| SWR-002 (UTC <= 1s) | X | X | | |
| SWR-003 (NO_FIX) | X | | | |

- Many-to-many relationships are OK:

  - One test can verify multiple requirements

  - One requirement can have multiple tests
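A traceability matrix is just a rendering of the link set, which also makes gaps easy to list. The sketch below uses hypothetical IDs (not the GNSS matrix above) to show many-to-many links and an uncovered requirement; `render_matrix` and `uncovered` are illustrative helpers, not part of any tool.

```python
# Hypothetical requirement -> test case links; many-to-many is allowed.
LINKS = {
    ("SWR-201", "TC-201"), ("SWR-201", "TC-204"),  # one requirement, two tests
    ("SWR-202", "TC-201"), ("SWR-202", "TC-202"),  # TC-201 verifies two requirements
    ("SWR-203", "TC-203"),
}
REQUIREMENTS = ["SWR-201", "SWR-202", "SWR-203", "SWR-204"]
TESTS = ["TC-201", "TC-202", "TC-203", "TC-204"]


def render_matrix(links, requirements, tests):
    """Render a requirement x test case matrix as text, with X marking a link."""
    width = max(len(r) for r in requirements)
    lines = [" " * width + " | " + " | ".join(tests)]
    for req in requirements:
        cells = [("X" if (req, tc) in links else "").center(len(tc)) for tc in tests]
        lines.append(req.ljust(width) + " | " + " | ".join(cells))
    return "\n".join(lines)


def uncovered(links, requirements):
    """Requirements that no test case is linked to (a completeness gap)."""
    linked = {req for req, _ in links}
    return [r for r in requirements if r not in linked]


print(render_matrix(LINKS, REQUIREMENTS, TESTS))
print("Uncovered:", uncovered(LINKS, REQUIREMENTS))  # Uncovered: ['SWR-204']
```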

Key Takeaways for Developers

- ASPICE is about how we work, not what tools we use

- V-model ensures early test planning and traceability

- SYS processes: complete system (HW + SW)

- SWE processes: software only

- Left side of V: development (requirements -> design -> code)

- Right side of V: verification (tests proving compliance)

- Traceability: links between work products

- Consistency: content actually matches

- Capability levels (0 to 5): rate how well each process is performed and managed

- Most important: do it continuously, not just before audits

Q&A / Discussion

- Questions?

- Common questions:

  - How does Agile/Scrum fit with ASPICE?

  - What is the difference between system and software requirements?

  - How do we handle traceability in practice?

  - What tools should we use?

  - How do we prepare for assessments?